Welcome back to A Little Wiser. We hope everyone had a lovely weekend. Thanks for all the great feedback on our editions last week! Today’s wisdom explores:

How to Hack Your Mind With the Placebo Effect

The Fermi Paradox: Where Is Everybody?

How Keynes Rewrote the Rules of Economics

Grab your coffee and let’s dive in.

PSYCHOLOGY

🧠 How to Hack Your Mind With the Placebo Effect

After treating World War II soldiers who felt little pain despite devastating injuries, anesthesiologist Henry Beecher realized the mind could influence the body in ways that defied the medical science of his time. His paper, The Powerful Placebo, documented case after case of patients improving because of the medication they believed they had been given. What research has since revealed is that the placebo effect is not a trick, a misperception, or a statistical nuisance to be controlled for. It is a real biological mechanism, capable of triggering measurable changes in brain chemistry, hormone levels, immune response, and cardiovascular function. In a previous edition we explored the nocebo effect, the dark mirror of this phenomenon, where negative expectations produce real physical harm. The placebo effect is its more hopeful counterpart, and the evidence for what it can do is striking.

In a landmark study published in Psychological Science, the psychologist Alia Crum at Harvard told one group of hotel housekeepers that their daily cleaning work met the surgeon general's recommended levels of physical exercise, while a control group was told nothing. Four weeks later, the informed group showed measurable reductions in blood pressure, body fat, and waist-to-hip ratio despite no change in their actual physical activity. The only variable was the story they had been given about the work they were already doing. A study by Draganich and Erdal, published in the Journal of Experimental Psychology, found that participants randomly told they had enjoyed above-average REM sleep scored significantly higher on cognitive performance tests than those told their sleep had been below average, regardless of what their sleep had actually been like.

What makes all of this practically useful is that the effect does not require deception to function. Crum's research on stress, published in the Journal of Personality and Social Psychology, showed that reframing stress as a performance enhancer rather than a threat produced measurable reductions in cortisol in real time. The brain responds to the meaning it assigns to an experience as much as to the experience itself, and meaning is something a person can consciously shape. This is not a call to think positively in the vague, effortful way that self-help books tend to recommend. The thought you choose to have about your body, your sleep, your stress, or your capacity for a given task is, within measurable biological limits, an input into them. Once you understand that the brain weighs meaning alongside raw data, reframing your experience becomes one of the most direct ways to shape it.


ASTRONOMY

🔭 The Fermi Paradox: Where Is Everybody?

In the summer of 1950, the physicist Enrico Fermi was walking to lunch at Los Alamos with a group of colleagues, talking about a recent cartoon in the New Yorker depicting aliens, when he asked a question that has not been satisfactorily answered in the more than seventy years since: where is everybody? The question sounds casual, almost comic, but it carries inside it one of the most unsettling problems in all of science. The universe is approximately thirteen and a half billion years old. It contains somewhere between two hundred billion and two trillion galaxies, each containing hundreds of billions of stars, a significant proportion of which have planets orbiting them. The mathematics of these numbers suggests that the universe, and our own galaxy within it, should be teeming with civilizations, some of them billions of years more advanced than our own. And yet we have detected nothing.

The first category of explanations suggests that intelligent life is simply far rarer than the numbers seem to imply. The biologist Ernst Mayr argued that the evolution of intelligence is so improbable, requiring such a precise and unlikely sequence of developments, that it may have happened only once in the observable universe. The paleontologist Peter Ward and astronomer Donald Brownlee developed this into what they called the Rare Earth hypothesis. They argued that the specific conditions that allowed complex life to develop on Earth (a large moon stabilizing our axial tilt, a Jupiter-sized planet absorbing asteroid impacts, a planet in exactly the right orbital position) are so uncommon that simple microbial life may be widespread across the universe while complex, intelligent life is vanishingly rare.

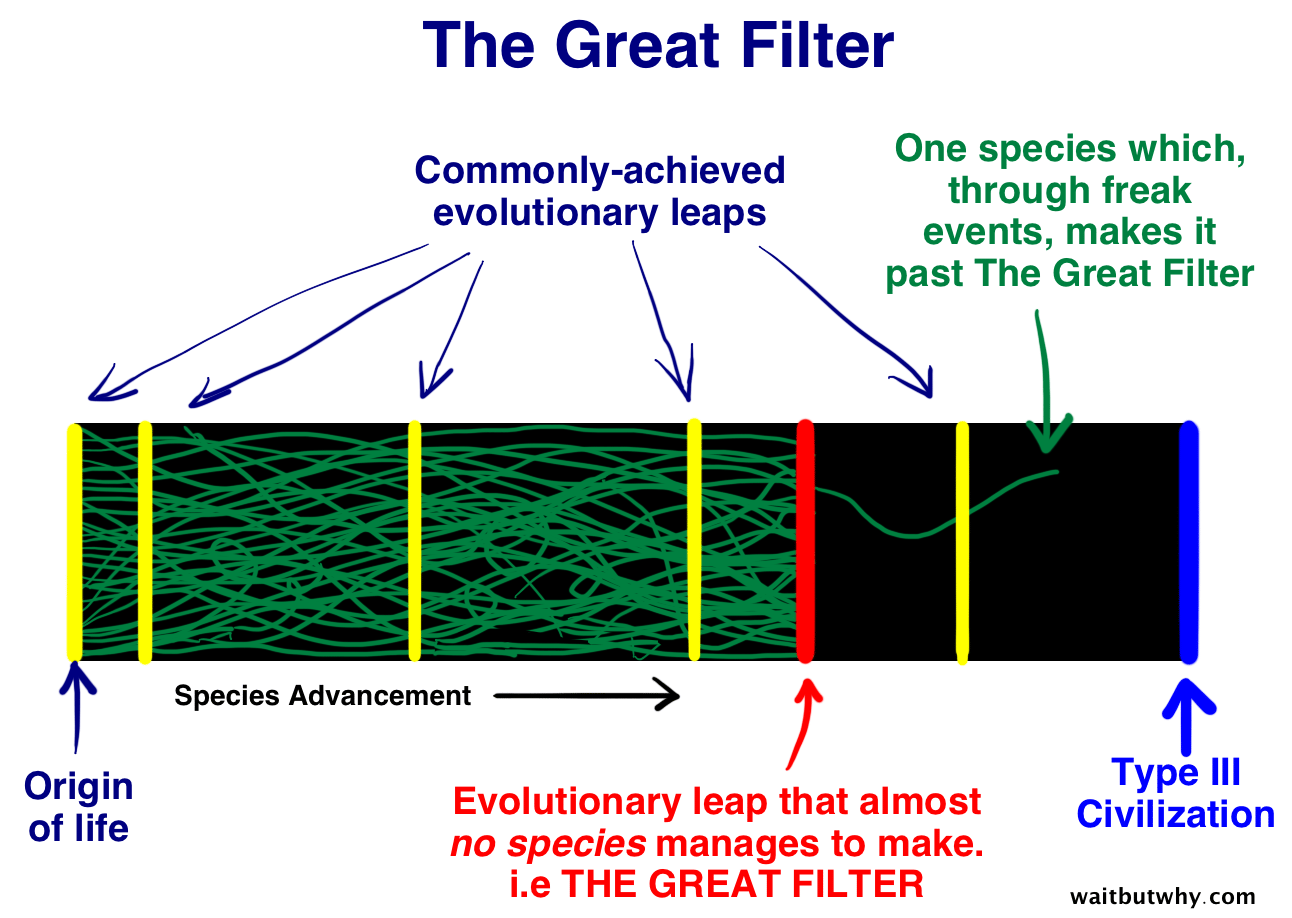

The explanations in the second category are considerably harder to sit with. The Great Filter hypothesis, developed by the economist Robin Hanson in the 1990s, proposes that somewhere between the formation of a planet and the development of a spacefaring civilization, there is a barrier so difficult to cross that almost no species makes it through. The deeply uncomfortable question this raises is whether that filter lies behind us or ahead. If the "wall" is in our past, it means we’ve already cleared a hurdle that stops everyone else. Maybe the spark of life is incredibly rare, or the jump from single cells to complex animals is a one-in-a-trillion fluke. If the "wall" is in our future, it means life starts easily, but advanced civilizations always hit a dead end. Perhaps they inevitably destroy themselves with nuclear war, climate collapse, or runaway AI before they can leave their home system. This suggests that every civilization reaches our level of technology, fails a final test, and vanishes. Fermi asked his question over lunch and reportedly seemed satisfied with whatever answer his colleagues gave him at the time. The rest of us have been considerably less able to let it go.

ECONOMICS

💰 How Keynes Rewrote the Rules of Economics

For most of the nineteenth century, the dominant assumption in economics was that markets, if left alone, would naturally correct themselves. If unemployment rose, wages would fall until it became cheap enough for businesses to hire again. If demand collapsed, prices would drop until people started buying. The system was self-regulating, and the appropriate role of government was to balance its budget, stay out of the way, and wait. Then the Great Depression arrived. Between 1929 and 1933, the American economy contracted by roughly a third, unemployment reached twenty-five percent, and the self-correcting mechanism that classical economics promised showed no sign of operating.

John Maynard Keynes was a British economist at Cambridge who had been watching the Depression with mounting frustration at the inadequacy of the tools available to understand it. In 1936 he published The General Theory of Employment, Interest and Money. The central insight was deceptively simple. In a normally functioning economy, what one person saves another person spends, and the system stays in balance. But when confidence collapses, everyone tries to save at once, spending falls across the entire economy simultaneously, businesses lose customers and lay off workers, those workers spend even less, and the whole thing spirals. This is now known as the paradox of thrift: the behavior that makes perfect sense for any individual household, spending less and saving more when times are uncertain, becomes catastrophic when everyone does it at the same time. The market, in this situation, cannot fix itself, because the problem is not a price being set wrongly but a collapse in the total level of demand that the private sector alone cannot restore.

The solution Keynes proposed was that government had to step in as the spender of last resort, injecting money into the economy through public works, infrastructure investment, and direct employment programs, even if this meant running a deficit. The logic was that money spent by the government would circulate through the economy, each dollar paid to a construction worker becoming grocery spending which became income for the grocer which became spending somewhere else, multiplying the initial injection into a larger overall boost to demand. This became known as the multiplier effect, and it provided much of the intellectual foundation for the New Deal in the United States and the postwar welfare states of Western Europe. Keynesian economics fell out of fashion in the 1970s when inflation and unemployment rose simultaneously, a combination his models had not fully anticipated, and was replaced in many governments by a return to market-oriented thinking. But every time a major economy faces a serious downturn, and governments respond by spending heavily to cushion the blow, the instinct they are acting on is Keynesian, whether they use the word or not.
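For readers who like to see the arithmetic, here is a minimal sketch of the multiplier logic described above. It rests on a simplifying assumption that is ours, not Keynes's exact model: every recipient re-spends the same fixed share of what they receive. The function names, the 100 injection, and the 0.8 share are all hypothetical, chosen only for illustration.

```python
def spending_rounds(injection, spend_share, rounds):
    """Spending generated in each successive round of recirculation.

    Assumes (hypothetically) that every recipient re-spends the same
    fixed share of the income they receive.
    """
    if not 0 <= spend_share < 1:
        raise ValueError("spend_share must be at least 0 and below 1")
    amounts = []
    current = injection
    for _ in range(rounds):
        amounts.append(current)
        current *= spend_share  # the next recipient spends only part of it
    return amounts


def total_boost(injection, spend_share):
    """Limit of the round-by-round sum: injection / (1 - spend_share)."""
    if not 0 <= spend_share < 1:
        raise ValueError("spend_share must be at least 0 and below 1")
    return injection / (1 - spend_share)


if __name__ == "__main__":
    # Hypothetical numbers: 100 of government spending, 80% re-spent each round.
    first_rounds = spending_rounds(100.0, 0.8, 5)
    print("First five rounds:", [round(x, 2) for x in first_rounds])
    print("Running total after five rounds:", round(sum(first_rounds), 2))
    print("Eventual total boost:", round(total_boost(100.0, 0.8), 2))
```

Under these made-up numbers, each round is smaller than the one before, and the running total creeps toward five times the original injection. The higher the share people re-spend, the larger the multiplier. Real-world estimates vary widely and depend on conditions this sketch ignores.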


We hope you enjoyed today’s edition! If you did, feel free to share it on social media or forward this email to friends.

Until next time... A Little Wiser Team